Abstract:

Evaluation and impact are two words many of us use daily. But what do they mean, and how can they improve our work? As a funder, we treat evaluation plans as an integral component of every proposal we review. This article explains why evaluations are so important to funders and what we look for in an evaluation; it also offers perspective on opportunities to shift your mindset and adopt a culture of evaluation in your organization.

Keywords:

evaluation, impact, philanthropy, funding, equitable evaluation

When I was a doctoral student, I was invited to attend a Grantmakers in Aging conference. What struck me most was the number of sessions focused on evaluation. Topics included what to look for in a strong evaluation, why evaluation is so important, and how to share lessons learned with trustees and partners. Prior to that, I hadn’t given much thought to the relevance and importance of evaluation to funders, or to how they might use that information. I’ve been intrigued to learn about evaluation and impact from a funder’s perspective ever since, and am glad to work at a foundation where I can further reflect upon the use of evaluative information and how it benefits collaborative efforts to improve quality of life for all of us as we age.

A Bit of Background: Focus on Evaluation

Writers of grant proposals are routinely asked to incorporate an evaluation of the work into the proposal. Whether submitting a proposal for direct service work, research, advocacy, or any other type of support, some element of evaluation is unavoidable.

Usually, when people hear evaluation, they think of the standard outcome evaluation, which focuses on determining whether a program improves one or more targeted outcomes for those served. But not all evaluations look or feel the same. The textbook definition of evaluation is, “The systematic collection of information about the activities, characteristics, and outcomes of programs to make judgments about the program, improve program effectiveness, and/or inform decisions about future program development” (Patton & Campbell-Patton, 2008, p. 39).

There are several types of evaluation beyond outcome evaluation, including process and implementation evaluation, and formative and summative evaluation. Outcome evaluation is generally used when an applicant is testing the effects of programs that are innovative, replicable, and shown to be feasible.

Process evaluations focus on how a program operates, incorporating descriptive information about clients and staff, the nature of services offered, methods of delivery, and patterns of service use. Process evaluation generates a blueprint of a program in action. Effective process evaluations allow applicants to explain how funds are used, provide a guide to others wishing to replicate the work, and describe the program or intervention. Implementation evaluation focuses on the practical lessons that emerge when activating a new project. A project rarely goes off without a hitch; lessons learned during implementation help organizations identify whether an approach may need to be modified and, if so, how to modify the approach effectively (RRF Foundation for Aging, n.d.).

The type of evaluation used depends upon the project or the type of work being conducted. It is important to recognize that a strong evaluation does not have to be time-consuming or expensive. Evaluations can run the gamut of rigor, with more rigorous evaluations (implemented through experimental research designs) being more expensive; quasi-experimental and non-experimental designs (such as observational or pre/post-tests) also can be of benefit in understanding the plans, process, and outcomes of the work.

A Bit of Background: Focus on Impact

I see evaluation as a tool to help understand whether the work aids progress toward social impact, health equity, social justice, and/or social change. When I was a graduate student, many of the traditional evaluation frameworks I was taught didn’t resonate with me. Sure, they helped us understand whether the objectives were being met, but if the objectives weren’t well written, the evaluation would have no chance of helping to determine impact. When I started work as a researcher in the field, in addition to applying traditional evaluation frameworks, I focused on the role a colleague had suggested, that of a “creative evaluator.” With this concept in mind, I strived to think creatively, to figure out ways to quantitatively or qualitatively understand whether the work my team was doing was progressing toward that elusive term, “impact.” After all, if our work wasn’t impactful, why were we doing it?

When I started working for a foundation, I brought this concept of creative evaluation with me, and brainstormed with my team to understand why we ask grantee partners to evaluate their work and how we use that information. In accordance with best practices and “trust-based philanthropy,” our goal as a funder is to approach relationships with grantee partners from “a place of trust and collaboration rather than compliance and control” (Trust-Based Philanthropy Project, 2023). One of the practices of trust-based philanthropy is streamlined reporting, which means not requiring grantee partners to submit cumbersome reports that will not be used in any significant way by the funder. So, why request that grantee partners complete evaluations?

Our job is to identify and support work that has the greatest potential for high impact within the foundation’s interests, while advancing diversity, equity, and inclusion efforts. We are a mid-size funder with a relatively fixed amount of funding we can dedicate each year to our mission of improving quality of life for all of us as we age. We are constantly trying to learn and understand what elements will help us achieve our mission, and without evaluative information we have no way of knowing the impact of our philanthropic dollars.

As explained by the Council on Foundations (n.d.), evaluations help foundations understand the quality or impact of funded programs, plan and implement new programs, make future grant decisions, and demonstrate accountability to those managing the foundation (i.e., the public trust, or trustees of the board).

At RRF Foundation for Aging, we promote evaluation as part of every grant to:

- Encourage applicants and grantees to become more effective learning organizations by gathering and systematically analyzing important client and program information about the nature, reach, quality, and efficiency of the services they provide;

- Enable the foundation to better understand the value of the investments we make and the lessons that funded projects can teach us about how to invest our grant dollars more effectively in the future; and

- Add to knowledge in the field about best practices in services for older adults by supporting, when appropriate, rigorous experimental or quasi-experimental outcome studies.

Our hope is to foster a culture of evaluation in the organizations with which we partner. Organizations with a culture of evaluation deliberately and actively seek evidence to better design and deliver programs (Stewart, 2014).

Important Elements to Include in Any Evaluation

When planning an evaluation, certain elements need to be considered and incorporated into the plan. First, evaluation must be part of the conversation from the beginning. It is difficult to begin thinking through evaluation after a program has already been planned, or worse yet, already implemented. If bringing in an external evaluator, it is important to clearly articulate program goals and objectives to the evaluator so all are on the same page regarding what is being evaluated and expected outcomes.

A strong evaluation will start with the basics, asking key questions such as: What aspects of the program are most effective? What do clients or patients like best about the program? How many sessions are needed to achieve results? Next, it is crucial to ask whether each question really needs an answer, and whether it is ethical and feasible to measure the result.

When planning an evaluation, I always recommend starting with a logic model (a depiction of the inputs, activities, outputs, and outcomes of a project) and working backward. In evaluation, one must define the desired outcome first, and then think about how to measure it. Next, one must determine what indicator will be used to assess the outcome, where that indicator can be found, and how to collect and store information about the indicator.

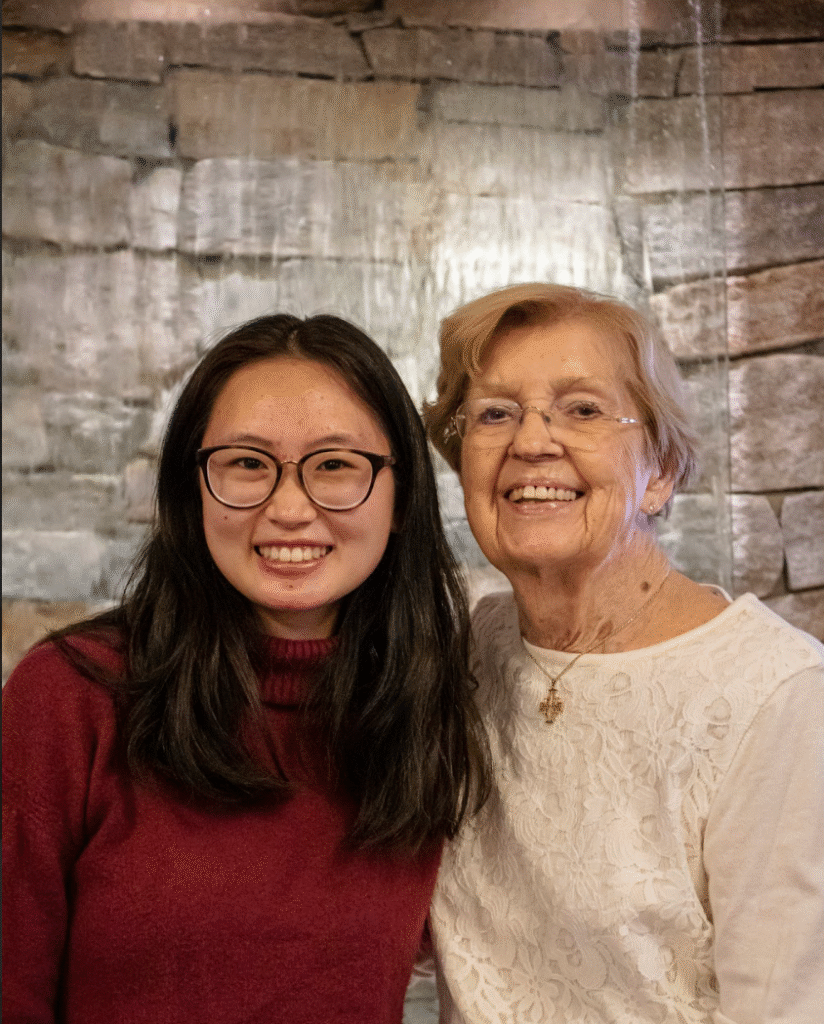

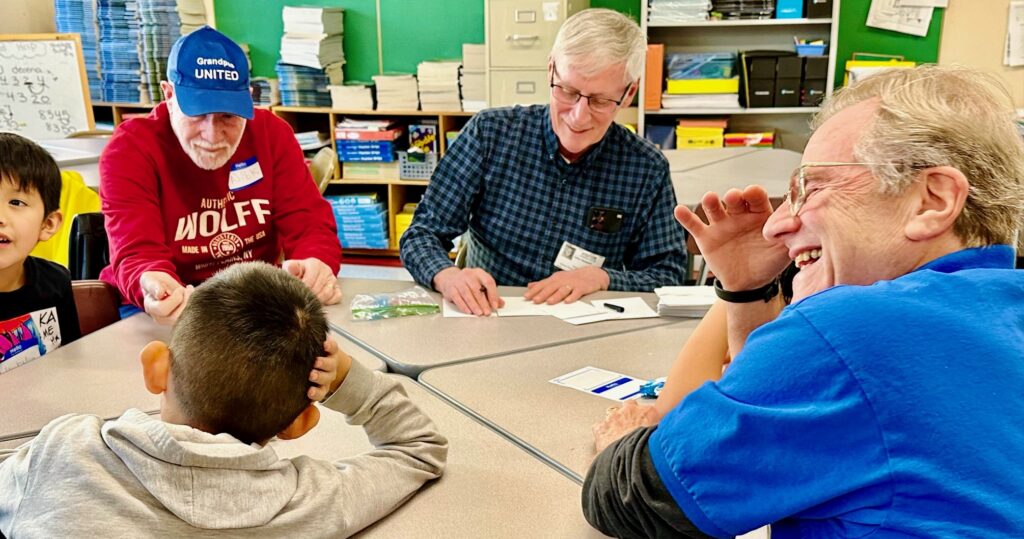

For instance, when planning an intergenerational program or intervention, one starts with the desired primary outcome—perhaps fostering intergenerational connections or reducing loneliness. Although there could be more than one outcome, it is key to be intentional about the desired primary outcome. Next, one must determine which indicator to use to assess the outcome, where that indicator can be found, and how to collect and store information about it.

In the example above, if implementing a program to reduce loneliness, one must determine how to conceptualize loneliness. Is it a self-reported measure of perceived loneliness? If that’s the case, one might opt to use the De Jong Gierveld Loneliness Scale, which measures “emotional” and “social” loneliness, or the Campaign to End Loneliness Measurement Tool, designed to understand a person’s experience of loneliness (Campaign to End Loneliness, n.d.). Many measurement tools are available for constructs in the social sciences. The Foundation for Social Connection has created an inventory of them. Also, for a comprehensive database of physical, mental, and social health measures and other patient-reported outcome measures, there’s the HealthMeasures website.

After a measure has been selected, evaluators must define who will collect the data, how they will collect it, where it will be stored, and who will analyze it. Data can be collected via interview or survey. In either case, it is important to consider how to make data collection accessible to all. Some examples include using large font for surveys, ensuring surveys are available in multiple languages, or making non-program staff available to conduct the survey in an interview format.
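For readers who like to see a plan written down in a structured way, here is one possible sketch in Python of a logic model that is read backward from the outcome and that flags planning questions still unanswered. It is an illustration only, not a template any funder requires; the class names, field names, and the example program details are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Indicator:
    """How the primary outcome will be measured (all values are hypothetical)."""
    name: str
    source: str        # where the indicator can be found
    collected_by: str  # who collects the data
    method: str        # for example "survey" or "interview"
    stored_in: str     # where the data will be stored
    analyzed_by: str   # who analyzes the data


@dataclass
class LogicModel:
    inputs: list[str]
    activities: list[str]
    outputs: list[str]
    primary_outcome: str
    indicator: Optional[Indicator] = None

    def backward(self) -> list[tuple[str, object]]:
        """Return the components starting from the outcome, the planning order."""
        return [
            ("primary_outcome", self.primary_outcome),
            ("outputs", self.outputs),
            ("activities", self.activities),
            ("inputs", self.inputs),
        ]

    def planning_gaps(self) -> list[str]:
        """Name the measurement questions that have no answer yet."""
        if self.indicator is None:
            return ["indicator"]
        return [
            name
            for name, value in vars(self.indicator).items()
            if not value.strip()
        ]


# Hypothetical intergenerational program aimed at reducing loneliness.
model = LogicModel(
    inputs=["staff time", "meeting space"],
    activities=["weekly intergenerational sessions"],
    outputs=["sessions held", "participants attending"],
    primary_outcome="reduced loneliness",
    indicator=Indicator(
        name="self-reported loneliness score",
        source="participant survey",
        collected_by="non-program staff",
        method="survey",
        stored_in="",    # not decided yet
        analyzed_by="",  # not decided yet
    ),
)

print([step for step, _ in model.backward()])
print(model.planning_gaps())
```

In this example the plan names an outcome, an indicator, and a collection method, but the gaps list shows that storage and analysis have not been assigned, which is the kind of omission that is easier to fix before a program launches than after.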

An additional element to consider is the sample: who will be included, and do they represent the population to be targeted by a program or intervention? We frequently see proposals submitted that focus on ideal, not actual, populations. For instance, some proposals plan to reduce loneliness but rely on a pre-post evaluation of a sample with low loneliness rates at baseline, which leaves little room to show improvement.
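A quick look at baseline data can reveal this problem before a pre-post design is locked in. The Python sketch below is one hypothetical way to do that; the function names, the 0-to-6 scale (higher meaning lonelier), the cutoff, and the scores are all invented for illustration and are not drawn from any particular instrument or proposal.

```python
from statistics import mean


def baseline_position(scores, scale_min, scale_max):
    """Average baseline score as a fraction of the scale range (0.0 = scale minimum)."""
    if not scores:
        raise ValueError("no baseline scores provided")
    if scale_max <= scale_min:
        raise ValueError("scale_max must be greater than scale_min")
    return (mean(scores) - scale_min) / (scale_max - scale_min)


def share_at_or_above(scores, cutoff):
    """Share of the sample scoring at or above a cutoff chosen by the evaluator."""
    if not scores:
        raise ValueError("no baseline scores provided")
    return sum(score >= cutoff for score in scores) / len(scores)


# Invented baseline scores on a hypothetical 0-to-6 scale.
baseline = [0, 1, 0, 2, 1, 0, 1, 3]

position = baseline_position(baseline, scale_min=0, scale_max=6)
share = share_at_or_above(baseline, cutoff=2)

print(f"Average baseline sits at {position:.0%} of the scale range")
print(f"{share:.0%} of the sample scores at or above the cutoff")
```

With these invented numbers, the average sits near the bottom of the scale and only a quarter of the sample meets the cutoff, which would suggest recruiting a sample closer to the population the program is meant to serve.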

Considerations for Equitable Evaluation

It also is critical for evaluation to be grounded in advancing equity, meaning the way an applicant conceptualizes outcomes, chooses variables of interest, and designs the evaluation process must draw from the needs and expertise of diverse community stakeholders (Equitable Evaluation Initiative, 2024). Evaluation should be designed and implemented with multicultural validity, and it should consider the effects of a strategy on different populations and on the underlying systemic drivers of inequity. It is important to remember that if we are not designing intentionally for equity, we are exacerbating inequity.

Additional questions regarding equity that evaluations should consider include: Who benefits most and least from the program/intervention? What assumptions were made? What might be some intended or unintended consequences? And how well does this program/intervention reflect the values, needs, and goals of the community? Engage stakeholders in the evaluation process, and don’t shy away from their constructive criticism and concerns. From personal experience, I’ve learned it will only strengthen the work.

What Makes a Project Have Potential for Impact?

Most foundations want to spur social change; for example, at RRF Foundation for Aging, our mission is to improve quality of life for older adults. Our board often questions how we know the work we fund is helping to achieve our mission. Unfortunately, individual evaluations of a diverse group of projects will not provide sufficient information for us to fully gauge the answer to that question. Therefore, when proposals come in, beyond reviewing for strong evaluations, we also assess how they fit into the ecosystem of our other work and ask whether a proposal has a high potential for impact.

Impact is one of the core values at RRF Foundation for Aging. We recognize impact is accomplished by “investing in effective organizations, sound program models, and strong policies.” To live this value, RRF approaches its work as a learning organization, with the goal of identifying the conditions under which new grants will have the greatest chance of impact. Identifying essential criteria allows us to improve our selection of projects for funding.

We assess for impact on individuals, on organizations, on communities, or on the field, depending upon the type of project. For example, in direct service projects, the work needs to have significant impact on the parties it serves, or on the ability of the organization to provide service.

We’ve learned that successful direct service projects are designed in response to a specific, demonstrated interest, need, and/or demand from the target population; they are designed and implemented in collaboration with other partnering organizations; they do not duplicate existing projects or parallel efforts; and they incorporate appropriate DEI considerations and cultural competencies.

When it comes to research, we note where it will take the field and what will result. Effective research projects focus on applied research with an actionable plan to apply findings to current practice. They use rigorous research methods, involve collaborative investigative teams, and build upon the most current knowledge.

Advocacy projects that achieve impact tend to target a specific issue, such as rising debt in older adults, take advantage of momentum and good timing, and involve collaboration with an appropriate mix of partners. Effective training projects start with the ability to demonstrate evidence of trainee desire and demand, involve interactive teaching methods to maximize learning, use technology in appropriate and seamless ways, and incorporate strategies for trainees to deepen their ability to apply what they’ve learned in the future.

Conclusion

Evaluation should not be considered an academic exercise. It is a necessary process to help achieve impact. While not all projects need an elaborate randomized controlled trial, it is important to obtain some level of data so that we can learn what works and where there are opportunities for improvement. At RRF Foundation for Aging, our hope is that implementing a brief reporting process that reflects program evaluation will help us understand a project’s impact and lessons learned, while also helping the project team reflect on progress made during the grant period. My hope is that organizations and researchers will shift their mindsets toward a culture of evaluation, in which qualitative and quantitative data are collected and organizations consider a mix of evidence to make informed decisions and improve their work.

Amy R. Eisenstein, PhD, is senior program officer and director of Research and Evaluation at the RRF Foundation for Aging in Chicago, IL.

Photo credit: Shutterstock/garagestock

References

Campaign to End Loneliness. (n.d.). Measuring your impact on loneliness in later life. https://www.campaigntoendloneliness.org/wp-content/uploads/Loneliness-Measurement-Guidance1.pdf

Council on Foundations. (n.d.). Grant evaluation. https://cof.org/topic/grant-evaluation

Equitable Evaluation Initiative. (2024). Elements of the EEF. https://www.equitableeval.org/post/eef-expansion-eef-elements

Patton, M. Q., & Campbell-Patton, C. E. (2008). Utilization-focused evaluation. Sage.

RRF Foundation for Aging. (n.d.). Evaluation guidelines. http://www.rrf.org/apply-for-a-grant/application-info-tips/evaluation-guidelines/

Stewart, J. (2014). Developing a culture of evaluation and research. Australian Institute of Family Studies. https://aifs.gov.au/resources/practice-guides/developing-culture-evaluation-and-research

Trust-Based Philanthropy Project. (2023). What is trust-based philanthropy? https://www.trustbasedphilanthropy.org/